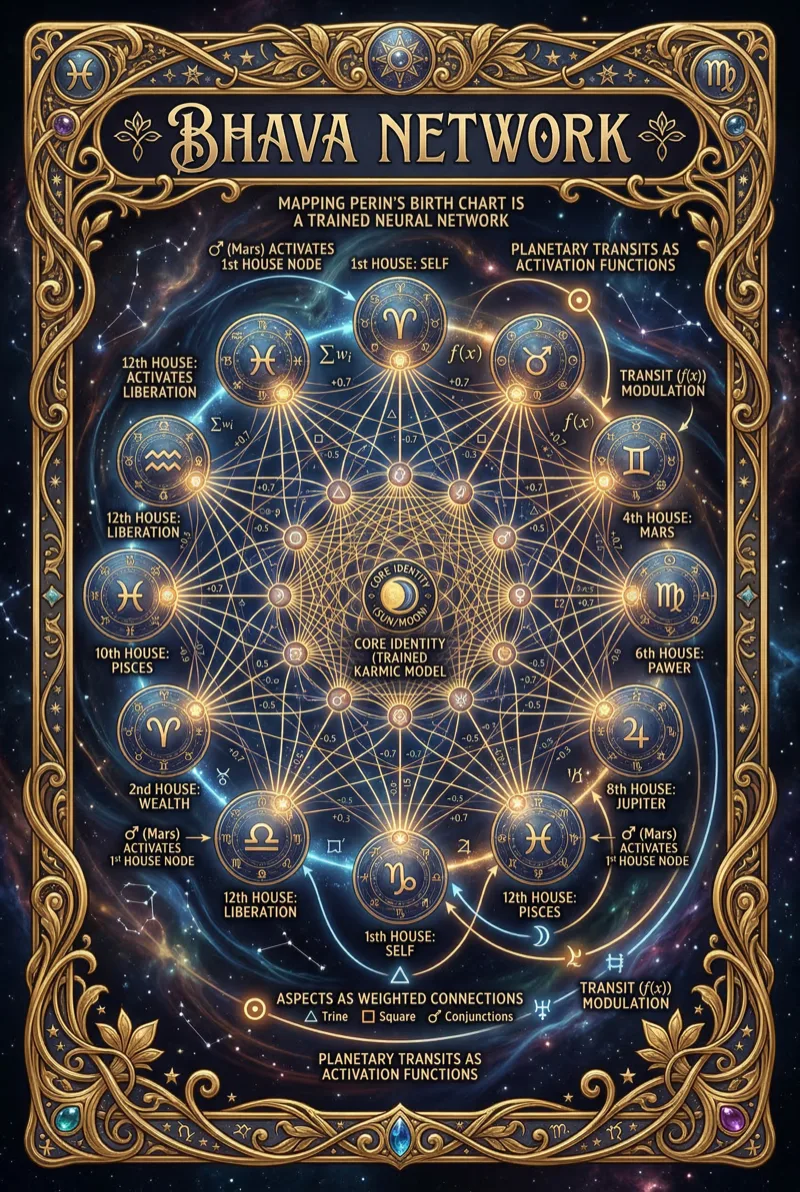

Bhava Aspects as Neural Networks: Weighted Connections in the House Graph

Runtime Version: 1.0.0

“A neural network learns by adjusting weights. A life learns by adjusting attention. The architecture is the same.” — Deep Learning Sutras

The Aspect Matrix as Adjacency Weights

In Vedic astrology, houses (bhavas) influence each other through aspects — geometric relationships determined by angular separation. The seventh house from any house receives a full aspect (weight 1.0). The fourth and tenth houses receive square aspects (weight 0.75). The fifth and ninth houses receive trine aspects (weight 0.85). These weights define the strength of informational flow between houses.

class BhavaAspectSystem:

def __init__(self):

self.houses = range(1, 13)

self.aspect_types = {

'full': 1.0,

'square': 0.75,

'trine': 0.85,

'opposition': 0.9

}

def create_aspect_matrix(self):

matrix = np.zeros((12, 12))

for house in self.houses:

opposite = (house + 6) % 12 or 12

matrix[house-1][opposite-1] = self.aspect_types['full']

square1 = (house + 3) % 12 or 12

square2 = (house + 9) % 12 or 12

matrix[house-1][square1-1] = self.aspect_types['square']

matrix[house-1][square2-1] = self.aspect_types['square']

trine1 = (house + 4) % 12 or 12

trine2 = (house + 8) % 12 or 12

matrix[house-1][trine1-1] = self.aspect_types['trine']

matrix[house-1][trine2-1] = self.aspect_types['trine']

return matrixThis produces a 12x12 weight matrix. If you have spent any time with neural networks, you recognize this immediately: it is the weight matrix of a single-layer neural network with 12 input neurons and 12 output neurons. The aspect weights are the connection strengths. The houses are the nodes. The planetary occupants are the activation values.

Network Architecture

A neural network consists of nodes (neurons), connections (synapses), and weights (connection strengths). The bhava system provides all three:

- Nodes: The 12 houses, each representing a domain of life experience

- Connections: The aspect relationships between houses

- Weights: The aspect strengths (1.0, 0.85, 0.75)

- Activation values: The planetary occupants and their individual strengths (Shadbala)

class BhavaNetwork:

def __init__(self, aspect_system):

self.aspect_matrix = aspect_system.create_aspect_matrix()

self.hidden_layers = [64, 32]

self.activation = 'relu'

def build_network(self):

model = tf.keras.Sequential([

tf.keras.layers.Dense(

self.hidden_layers[0],

activation=self.activation,

input_shape=(12,)

),

tf.keras.layers.Dense(

self.hidden_layers[1],

activation=self.activation

),

tf.keras.layers.Dense(12, activation='sigmoid')

])

weights = self.aspect_matrix.copy()

model.layers[0].set_weights([weights, np.zeros(12)])

return modelThe key insight is weight initialization. In modern deep learning, proper weight initialization is critical — Xavier initialization, He initialization, and other schemes prevent vanishing or exploding gradients during training. The bhava aspect matrix provides a pre-trained weight initialization derived from millennia of empirical observation. The network does not need to learn the connection strengths from scratch. They come pre-configured by geometric necessity.

Forward Propagation Through Houses

When a planet occupies a house, it activates that node with a strength proportional to its Shadbala. This activation propagates through the aspect matrix to all connected houses, weighted by the aspect strengths. The output of this forward pass is a 12-element vector representing the influence distribution across all houses.

def analyze_house_patterns(birth_chart):

aspect_system = BhavaAspectSystem()

network = BhavaNetwork(aspect_system)

patterns = generate_house_patterns(birth_chart)

labels = generate_influence_labels(birth_chart)

model, history = network.train_on_patterns(patterns, labels)

return {

'model': model,

'training_history': history,

'aspect_weights': model.layers[0].get_weights()[0]

}Consider Jupiter in the first house with strong Shadbala. The forward pass sends activation to the seventh house (full aspect, weight 1.0), the fifth and ninth houses (trine aspects, weight 0.85), and the fourth and tenth houses (square aspects, weight 0.75). The resulting influence pattern describes how Jupiter’s presence in the ascendant distributes benefit across the entire chart.

This is precisely how information flows through a trained neural network. An input activation propagates through weighted connections to produce an output pattern. The bhava network’s output pattern is the traditional astrological reading — which areas of life receive Jupiter’s beneficence, and in what proportion.

Backpropagation and Karmic Learning

In standard neural networks, backpropagation adjusts weights to minimize prediction error. The network learns by comparing its output to desired output and propagating the error signal backward through the weight matrix, adjusting each weight proportionally to its contribution to the error.

The Vedic system’s analog to backpropagation is the concept of karmic refinement. Life experiences (outputs) that deviate from dharmic alignment (desired outputs) generate an error signal that propagates backward through the bhava network, adjusting the effective weights of planetary influences. Remedial measures — mantras, gemstones, charitable acts — are manual weight adjustments applied to specific connections in the network.

This is not metaphor stretched to breaking. The mathematical structure is identical. A weight matrix transforms inputs to outputs. Error signals adjust weights. The system converges toward a configuration that minimizes dissonance. Whether you call the error signal “loss function” or “karmic debt,” the optimization algorithm is gradient descent.

Attention Mechanisms and Planetary Yogas

Modern transformer networks use attention mechanisms — learned functions that dynamically weight the importance of different inputs depending on context. The bhava network has a built-in attention mechanism: planetary yogas.

A yoga is a specific combination of planets in specific houses that produces effects greater than the sum of individual planetary influences. Gajakesari Yoga (Jupiter and Moon in mutual kendras) amplifies both planets’ influence beyond what their individual Shadbalas would predict. This is multiplicative attention — the co-occurrence of specific activations in specific nodes produces a nonlinear amplification that additive weight matrices cannot capture.

In transformer terminology, the yoga acts as a multi-head attention layer that sits between the input (planetary positions) and the weight matrix (aspect connections). It modulates the effective weights based on contextual relationships between activations — exactly what attention mechanisms do in natural language processing.

Graph Neural Networks and the Zodiac Graph

The bhava system is most naturally modeled not as a feed-forward network but as a Graph Neural Network (GNN). In GNNs, the topology of the graph — which nodes connect to which — is a fundamental part of the architecture. Messages pass between connected nodes, and each node updates its state based on aggregated messages from its neighbors.

The zodiac is a cyclic graph with 12 nodes and aspect-defined edges. Each node (house) sends messages to its aspected houses, weighted by aspect strength. Each house aggregates incoming messages and updates its activation state. This message-passing process, iterated over multiple rounds, produces a final state that integrates all planetary influences through all aspect pathways.

GNN message passing converges after a number of rounds equal to the graph’s diameter. The zodiac graph, with aspects creating shortcuts across the cycle, has an effective diameter of about 2 — meaning two rounds of message passing are sufficient to propagate information from any house to any other house. This is why Vedic astrology can assess any house’s condition by examining just the houses that aspect it: two hops in the graph reach everywhere.

The Pre-Trained Model

The bhava aspect network is a pre-trained model. Its weights were not learned through gradient descent on labeled data. They were learned through centuries of contemplative observation — the original training set was lived human experience, and the training algorithm was pattern recognition applied across thousands of birth charts and life outcomes.

Modern machine learning seeks to replicate this process computationally. But the seers had an advantage that algorithms lack: they were both the network and the trainer. They experienced the forward pass as lived life and the backpropagation as contemplative reflection. The resulting weight matrix — the aspect system — is a model trained on the most comprehensive dataset possible: the full spectrum of human existence.

This document is part of the Lorenz-Kundli Pattern Recognition series exploring mathematical-mystical parallels across the pattern space of consciousness.